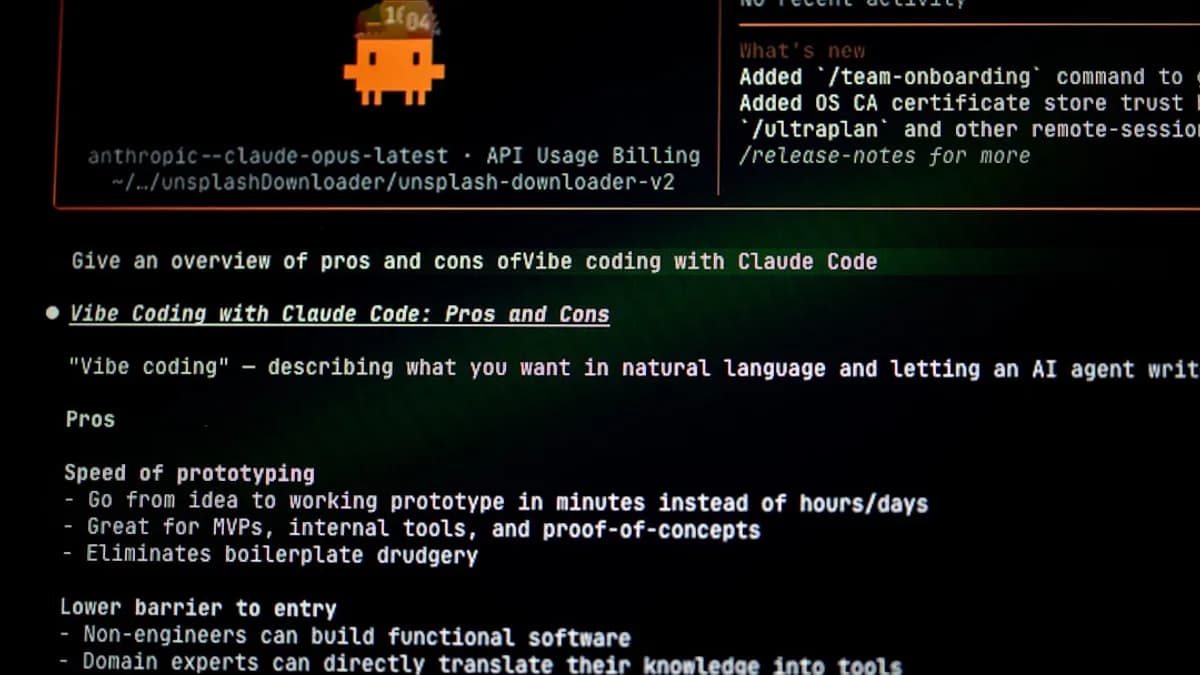

Overview

API pricing and access policies shape developer adoption and commercial viability across generative AI. Anthropic, the company behind the Claude models, recently changed its API pricing and access controls in ways that notably affected users of third-party tools such as OpenClaw. The most visible consequence was a temporary ban on OpenClaw’s creator from accessing Claude, which followed a pricing change for OpenClaw users [Source: TechCrunch]. The episode reflects a broader trend in which AI providers recalibrate their offerings in response to rising demand, security concerns, and competition.

Such changes do not occur in isolation. The context includes Anthropic’s launch of Mythos, an AI model with significant implications for cybersecurity [Source: Wired], and the intensifying competition among AI coding platforms [Source: The Verge]. API policies, pricing, and rate limits are becoming battlegrounds for both user retention and ecosystem control. As AI providers tighten access, developers face new hurdles in integration, cost management, and compliance.

This analysis unpacks Anthropic’s API/pricing change, its rationale, the technical and business impacts, and how developers can navigate the shifting terrain—including exploring alternatives and strategies to mitigate disruption.

What Changed

API Pricing and Access Controls

Anthropic’s decision to alter its API pricing and access mechanisms came amid rapidly rising usage by third-party tools, notably OpenClaw. OpenClaw is a platform that enables developers to interface with large language models, facilitating tasks such as code generation, automation, and knowledge extraction. Before the change, OpenClaw users enjoyed a predictable pricing tier and unrestricted access to Claude’s API. However, as demand surged, Anthropic revised the pricing scheme, introducing new tiers and rate limits.

Key changes included:

- Pricing Tiers: Anthropic moved from a flat-rate, pay-as-you-go model to tiered pricing based on usage volume. Higher tiers now carry substantially higher per-token costs, which weighs most on high-frequency users [Source: TechCrunch].

- Rate Limits: The company imposed stricter rate limits on API calls, reducing the number of requests allowed per minute and per day for non-enterprise accounts.

- Access Restrictions: In response to perceived abuse or excessive usage, Anthropic temporarily banned certain accounts—including OpenClaw’s creator—from accessing the Claude API. This action was described as a measure to enforce fair use and prevent resource exhaustion.

- Feature Segmentation: Some advanced features, such as access to the Mythos model or priority processing, are now reserved for premium tiers or enterprise customers.

Contextual Factors

Anthropic’s changes are not merely about cost recovery. The launch of Mythos—a model that experts warn could be a "hacker’s superweapon"—has heightened concerns about API misuse and cybersecurity risks, motivating stricter controls [Source: Wired]. Moreover, the competitive landscape is heating up, with OpenAI, Google, and others racing to offer more powerful coding tools, driving providers to protect their platforms from overuse, abuse, and unauthorized access [Source: The Verge].

Impact on Developers

Immediate Disruption

The abrupt ban of OpenClaw’s creator and the introduction of new pricing tiers caused confusion and frustration among developers. Many rely on tools like OpenClaw for integrating AI into their workflows, and the disruption forced them to reassess ongoing projects and budgets. For startups and small businesses, the increased costs and rate limits can be prohibitive, potentially stifling innovation and access.

Cost Management Challenges

Tiered pricing models are often opaque, with rates that vary by usage pattern. Developers must now forecast token consumption more accurately, negotiate enterprise access, or risk hitting rate limits in production. This adds administrative overhead and can produce unexpected bills, especially for teams scaling AI workloads.
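To make the forecasting step concrete, here is a minimal Python sketch of a tiered-cost calculation. The tier boundaries and prices are invented for illustration and are not Anthropic’s actual rates. `Tier`, `forecast_monthly_cost`, and `EXAMPLE_TIERS` are hypothetical names, and the sketch assumes marginal pricing, where each tier’s rate applies only to the tokens that fall inside that tier.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Tier:
    upper_tokens: Optional[int]  # cumulative monthly ceiling; None means no ceiling
    usd_per_million: float       # hypothetical price for tokens inside this tier


def forecast_monthly_cost(tokens: int, tiers: Sequence[Tier]) -> float:
    """Marginal tiered cost: each tier's rate applies only to tokens inside it."""
    cost = 0.0
    lower = 0
    for tier in tiers:
        upper = tokens if tier.upper_tokens is None else min(tokens, tier.upper_tokens)
        if upper > lower:
            cost += (upper - lower) / 1_000_000 * tier.usd_per_million
        # Stop once the forecast volume is fully covered by the tiers seen so far.
        if tier.upper_tokens is None or tokens <= tier.upper_tokens:
            break
        lower = tier.upper_tokens
    return cost


# Hypothetical schedule for illustration only, NOT a real provider's pricing.
EXAMPLE_TIERS = [
    Tier(upper_tokens=10_000_000, usd_per_million=4.0),
    Tier(upper_tokens=50_000_000, usd_per_million=8.0),
    Tier(upper_tokens=None, usd_per_million=16.0),
]
```

Under this made-up schedule, a forecast of 30 million tokens works out to 10 × 4 + 20 × 8 = 200 USD. Running the same function across low, expected, and high usage scenarios gives a rough budget range before the bill arrives.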

Technical Integration Risks

Rate limits and access bans introduce uncertainty into application reliability. Developers building real-time or batch-processing pipelines must now implement failover strategies and usage monitoring to avoid service interruptions. Projects that depend on consistent API access may need architectural changes, such as caching, batching, or multi-provider integration.
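One way to avoid tripping a per-minute limit is to throttle requests on the client side. The sketch below uses a token bucket, a common technique that the reporting does not attribute to any provider. The `TokenBucket` class, its parameters, and any limits passed to it are assumptions chosen for illustration, not documented Anthropic values.

```python
import time
from typing import Callable


class TokenBucket:
    """Client-side throttle: at most `capacity` requests per `period` seconds on average."""

    def __init__(self, capacity: int, period: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_rate = capacity / period  # tokens added per second
        self.tokens = float(capacity)         # start with a full bucket
        self.clock = clock
        self.last = clock()

    def _refill(self) -> None:
        # Add tokens for the time elapsed since the last check, capped at capacity.
        now = self.clock()
        self.tokens = min(self.capacity,
                          self.tokens + (now - self.last) * self.refill_rate)
        self.last = now

    def try_acquire(self) -> bool:
        """Consume one token and return True if a request may be sent now."""
        self._refill()
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False

    def wait_time(self) -> float:
        """Seconds until the next token is available (0.0 if one is ready)."""
        self._refill()
        if self.tokens >= 1.0:
            return 0.0
        return (1.0 - self.tokens) / self.refill_rate
```

A caller would check `try_acquire()` before each API request and, when it returns `False`, either sleep for `wait_time()` seconds or queue the work. The injectable `clock` is a design choice that keeps the throttle testable without real delays.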

Ecosystem Fragmentation

As providers like Anthropic segment features and restrict access, developers face a more fragmented landscape. Integrating multiple AI APIs to maintain feature parity becomes necessary, but this increases complexity and reduces interoperability. For open-source projects, these barriers may slow adoption and force contributors to seek alternative platforms.

Security and Compliance

With Mythos’ launch, the cybersecurity risks associated with powerful AI models are more acute. Providers are tightening API access to prevent malicious use, but this also impacts legitimate developers. The balance between security and usability is shifting, and teams may need to implement additional safeguards to comply with provider policies.

Case Example: OpenClaw’s Creator

The temporary ban of OpenClaw’s creator illustrates the risks of relying on third-party platforms. When API providers change their terms, tool creators can lose access overnight, disrupting hundreds or thousands of downstream users. Developers must now treat API access as a critical dependency, with contingency plans for sudden changes.

Alternatives

Competing AI Platforms

The AI coding wars are intensifying, and developers have several alternative platforms to consider:

- OpenAI (ChatGPT, Codex): OpenAI offers robust APIs for code generation and natural language processing, with transparent pricing and well-defined rate limits. However, OpenAI also reserves advanced features for enterprise customers, and has a history of tightening access during high-demand periods [Source: The Verge].

- Google Gemini: Google’s Gemini API provides advanced code completion and analysis tools, with competitive pricing. Integration with Google Cloud services offers scalability, but rate limits and feature segmentation apply.

- Microsoft GitHub Copilot: Copilot is tightly integrated into developer workflows, but its API is less open and more focused on IDE integration. Pricing is subscription-based, and enterprise tiers provide broader access.

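- Meta Llama: Meta’s Llama models are released as downloadable weights under Meta’s own community license (often described as open-source, though the license carries usage restrictions), allowing self-hosting and customization. However, API access through third-party providers may be subject to similar pricing and rate controls.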

Open-Source Solutions

- Self-Hosted Models: Developers can deploy open-source models like Llama or Mistral on their own infrastructure, avoiding API pricing and rate limits. This requires technical expertise and hardware investment but grants full control over usage.

- Hybrid Architectures: Teams may combine provider APIs with self-hosted models, using the API for specialized tasks and local models for bulk processing.
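A hybrid setup can be as simple as a routing function that decides, per task, whether a request goes to a self-hosted model or to a provider API. The following is a minimal sketch under that assumption. `make_hybrid_router`, the stub handlers, and the task labels are all hypothetical, and the stubs return strings instead of calling any real model.

```python
from typing import Callable, FrozenSet

Handler = Callable[[str], str]


def make_hybrid_router(local_model: Handler, hosted_api: Handler,
                       specialized_tasks: FrozenSet[str]) -> Callable[[str, str], str]:
    """Build a router: specialized task types use the hosted API, the rest run locally."""

    def route(task_type: str, prompt: str) -> str:
        handler = hosted_api if task_type in specialized_tasks else local_model
        return handler(prompt)

    return route


def local_stub(prompt: str) -> str:
    """Placeholder for a self-hosted model call (no real inference)."""
    return f"local:{prompt}"


def hosted_stub(prompt: str) -> str:
    """Placeholder for a provider API call (no network access)."""
    return f"hosted:{prompt}"


# Hypothetical split: only "security_review" is sent to the paid API.
route = make_hybrid_router(local_stub, hosted_stub, frozenset({"security_review"}))
```

Keeping the routing rule in one place means the split between paid API calls and local bulk work can be adjusted as prices or limits change, without touching the calling code.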

Network and Security Tools

- Little Snitch for Linux: The launch of Little Snitch’s network monitoring tools on Linux provides new ways to track and control outbound connections, helping developers manage API calls and detect unauthorized access [Source: The Verge]. While Little Snitch is not a full security tool on Linux, it offers granular visibility into application behavior.

API Aggregators

- Multi-Provider Platforms: Tools like OpenClaw, while disrupted by Anthropic’s changes, may pivot to aggregate multiple APIs, offering fallback options and usage tracking across providers.

Comparative Table

| Provider | Pricing Model | Rate Limits | Feature Segmentation | Self-Hosting | Security Controls |
|---|---|---|---|---|---|
| Anthropic Claude | Tiered (variable) | Strict | Yes | No | High (Mythos) |
| OpenAI | Transparent (fixed) | Moderate | Yes | No | Moderate |
| Google Gemini | Competitive | Moderate | Yes | No | Moderate |
| GitHub Copilot | Subscription | Low | Yes | No | Moderate |
| Meta Llama | Free/Open-source | N/A | No | Yes | Customizable |

Recommendations

1. Diversify API Dependencies

Developers should avoid single-provider lock-in. Integrate multiple AI APIs or maintain the capability to switch providers quickly. Use abstraction layers in your codebase to facilitate rapid migration.
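An abstraction layer of this kind might look like the sketch below: application code depends on a small interface, and vendor-specific clients are registered behind it. Every name here (`CompletionProvider`, `StubProvider`, `register_provider`, `get_provider`, `summarize`, and the `vendor_a`/`vendor_b` labels) is hypothetical, and the stub stands in for real SDK calls, which would differ per vendor.

```python
from typing import Callable, Dict, Protocol


class CompletionProvider(Protocol):
    """Interface the application codes against; vendors stay behind it."""

    def complete(self, prompt: str) -> str:
        ...


class StubProvider:
    """Stand-in for a real vendor client (hypothetical; makes no network calls)."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


_FACTORIES: Dict[str, Callable[[], CompletionProvider]] = {}


def register_provider(name: str, factory: Callable[[], CompletionProvider]) -> None:
    """Make a provider selectable by name, e.g. from a config file."""
    _FACTORIES[name] = factory


def get_provider(name: str) -> CompletionProvider:
    try:
        factory = _FACTORIES[name]
    except KeyError:
        raise ValueError(f"unknown provider: {name!r}") from None
    return factory()


def summarize(provider: CompletionProvider, text: str) -> str:
    """Application code: knows the interface, not the vendor."""
    return provider.complete(f"Summarize: {text}")


register_provider("vendor_a", lambda: StubProvider("vendor_a"))
register_provider("vendor_b", lambda: StubProvider("vendor_b"))
```

With this shape, switching providers becomes a configuration change (`get_provider("vendor_b")`) and not a rewrite of every call site.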

2. Monitor Usage and Costs

Implement real-time monitoring of API usage and costs. Set alerts for approaching rate limits and budget thresholds. Use network monitoring tools like Little Snitch (now available on Linux) to gain visibility into outbound API calls.
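As a rough illustration of budget alerts, the sketch below accumulates per-request cost and records an alert the first time spending crosses each threshold. `UsageMonitor`, its fields, and the default thresholds are hypothetical, and the per-million-token prices are supplied by the caller because actual rates depend on the provider and plan.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class UsageMonitor:
    monthly_budget_usd: float
    alert_fractions: Tuple[float, ...] = (0.5, 0.8, 1.0)  # assumed thresholds
    spent_usd: float = 0.0
    alerts: List[str] = field(default_factory=list)
    _fired: Set[float] = field(default_factory=set)

    def record(self, input_tokens: int, output_tokens: int,
               usd_per_m_input: float, usd_per_m_output: float) -> float:
        """Add one request's cost and fire each budget alert at most once."""
        cost = (input_tokens * usd_per_m_input
                + output_tokens * usd_per_m_output) / 1_000_000
        self.spent_usd += cost
        for fraction in self.alert_fractions:
            threshold = fraction * self.monthly_budget_usd
            if fraction not in self._fired and self.spent_usd >= threshold:
                self._fired.add(fraction)
                self.alerts.append(
                    f"{fraction:.0%} of budget reached: "
                    f"${self.spent_usd:.2f} of ${self.monthly_budget_usd:.2f}"
                )
        return cost
```

In a real deployment the `alerts` list would typically be replaced by a call to a paging or chat notification system. Token counts would come from the usage figures that most provider APIs return with each response.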

3. Plan for Rate Limit Failover

Architect applications with built-in failover for rate limits and access bans. This might include caching responses, batching requests, or switching to alternative APIs when limits are reached.
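The caching and provider-switching ideas can be combined in a few lines. The sketch below checks a cache first, then tries providers in order until one succeeds. `ProviderUnavailable` and `complete_with_failover` are hypothetical names, providers are modeled as plain callables, and a real implementation would translate each vendor’s rate-limit or ban error into the shared exception.

```python
from typing import Callable, Dict, List, Sequence


class ProviderUnavailable(Exception):
    """Hypothetical error meaning a provider was rate limited, banned, or down."""


def complete_with_failover(prompt: str,
                           providers: Sequence[Callable[[str], str]],
                           cache: Dict[str, str]) -> str:
    """Serve from cache if possible; otherwise try each provider in order."""
    if prompt in cache:
        return cache[prompt]

    errors: List[ProviderUnavailable] = []
    for provider in providers:
        try:
            result = provider(prompt)
        except ProviderUnavailable as exc:
            errors.append(exc)  # remember the failure and fall through to the next one
            continue
        cache[prompt] = result  # store so repeat prompts cost nothing
        return result

    raise ProviderUnavailable(
        f"all {len(providers)} providers failed: {[str(e) for e in errors]}"
    )
```

An exact-match cache like this only helps with repeated prompts, and responses from different providers are not interchangeable in quality, so the fallback order is itself a product decision.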

4. Consider Self-Hosting

For teams with technical capacity, self-host open-source models to avoid dependency on external API changes. This is especially viable for bulk processing tasks where latency and scalability are less critical.

5. Negotiate Enterprise Access

If usage is high or mission-critical, engage providers for enterprise tiers. Negotiate for custom rate limits, pricing, and feature access. Document all agreements and SLAs to protect against sudden changes.

6. Stay Informed on Policy Changes

Subscribe to provider newsletters, monitor developer forums, and participate in early-access programs. Rapid API changes are common, and proactive awareness can prevent disruptions.

7. Address Security and Compliance

As AI models like Mythos introduce new cybersecurity risks, implement robust access controls and audit trails. Ensure compliance with provider terms and industry regulations.
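An audit trail can be made tamper-evident by chaining each record to the hash of the one before it. The sketch below is one possible approach and not a requirement stated by any provider. `append_audit_record` and `verify_audit_log` are hypothetical helpers, and the record fields (`actor`, `action`, `timestamp`) are assumptions about what a team might want to log.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_audit_record(log: List[Dict[str, Any]], actor: str,
                        action: str, timestamp: str) -> Dict[str, Any]:
    """Append a record whose hash covers its content and the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action,
            "timestamp": timestamp, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify_audit_log(log: List[Dict[str, Any]]) -> bool:
    """Return False if any record was edited, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body.get("prev_hash") != prev_hash or _digest(body) != record.get("hash"):
            return False
        prev_hash = record["hash"]
    return True
```

This detects after-the-fact edits to the log but does not replace access control on the log itself; anyone who can rewrite the entire chain can still forge it.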

8. Support Open-Source Ecosystems

Contribute to or adopt open-source tools that reduce dependency on proprietary APIs. Encourage community-driven development of AI models and network monitoring solutions.

9. Communicate with Users

If you build third-party tools (like OpenClaw), maintain transparent communication with users about API changes, disruptions, and contingency plans. Build trust by preparing for rapid adaptation.

Conclusion

Anthropic’s API/pricing change—culminating in the temporary ban of OpenClaw’s creator—highlights the volatility of the AI service landscape. As providers tighten controls in response to demand, competition, and security concerns, developers must adopt resilient strategies for integration, cost management, and compliance. Alternatives exist, but each comes with trade-offs in usability, pricing, and technical overhead. The best defense against sudden API changes is diversification, proactive monitoring, and engagement with both proprietary and open-source ecosystems. In an environment where access can change overnight, agility is the developer’s greatest asset.

References: