Stream TaskTrove on Demand and Ditch Multi-Gigabyte Downloads

You can explore the full TaskTrove dataset without ever filling your SSD. Hugging Face’s streaming API lets you pull samples from TaskTrove, a dataset notorious for its size and complexity, without downloading gigabytes of data up front. You can inspect, parse, and visualize samples individually, staying nimble while working with heavyweight research data. This guide follows a workflow described by MarkTechPost and builds a practical pipeline to stream, analyze, and validate TaskTrove for your next data science project.

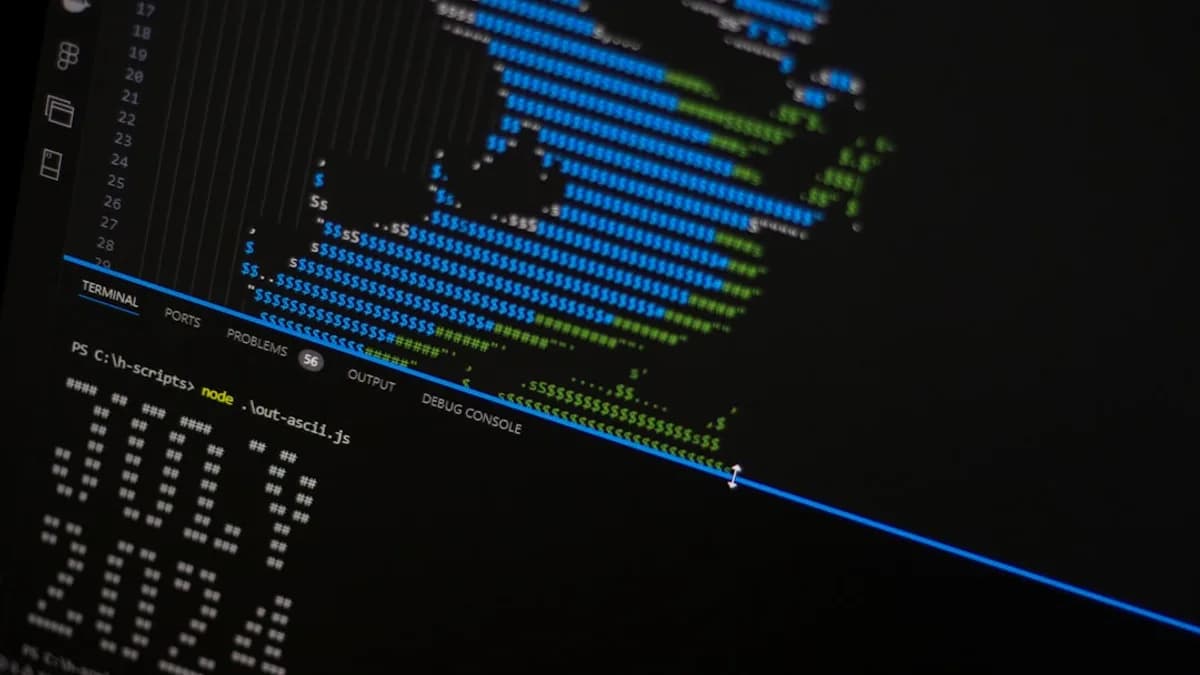

Prepare Your Environment for Streaming TaskTrove Dataset Exploration

Before diving in, get your Python environment set up for streaming and visualization:

Install core libraries:

Run `pip install datasets matplotlib streamlit pandas` to cover streaming, parsing, and visualization. If you’re working in a Jupyter environment, add `!pip install datasets matplotlib streamlit pandas` at the top of your notebook.

Set up Hugging Face API access:

Register for a Hugging Face account and generate an access token at https://huggingface.co/settings/tokens. Store your token securely. Set it in your environment with `export HF_TOKEN='your_token_here'`, or use Python’s `os.environ`.

Configure dependencies and environment variables:

Set `PYTHONHASHSEED=0` for reproducibility, and consider using `conda` or `venv` to isolate your environment. For large-scale streaming, configure your machine’s network and memory settings. If running on cloud, check quotas for outbound bandwidth.
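For the `os.environ` route, a minimal sketch might look like the following. The helper name `read_hf_token` is hypothetical, and the code assumes you keep the token in the `HF_TOKEN` variable as in the shell example.

```python
import os
from typing import Optional


def read_hf_token(env_var: str = "HF_TOKEN") -> Optional[str]:
    """Return the token stored in the environment, or None if it is missing or blank.

    `read_hf_token` is an illustrative helper, not part of any library.
    """
    token = os.environ.get(env_var, "").strip()
    return token or None


if __name__ == "__main__":
    # Avoid printing the token itself; only report whether it is present.
    print("Token found" if read_hf_token() else "HF_TOKEN is not set")
```

Reading the token at runtime keeps it out of your notebooks and source files, which matters if you share or commit them.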

Watch out for:

Conflicting library versions. Always check compatibility between datasets, streamlit, and matplotlib. If you hit errors, run pip list and update mismatched packages.
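If you’d rather check versions from Python than scan `pip list`, a small sketch using the standard library’s `importlib.metadata` could look like this. The function name `installed_versions` is made up for illustration.

```python
from importlib import metadata
from typing import Dict, Iterable, Optional


def installed_versions(packages: Iterable[str]) -> Dict[str, Optional[str]]:
    """Map each package name to its installed version, or None if it is not installed."""
    versions: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    wanted = ["datasets", "matplotlib", "streamlit", "pandas"]
    for name, version in installed_versions(wanted).items():
        print(f"{name}: {version or 'NOT INSTALLED'}")
```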

Stream the TaskTrove Dataset Without Full Download to Save Storage

Streaming beats static downloads for datasets like TaskTrove, which can easily top 10GB. Here’s how to pull samples on demand:

Access TaskTrove via streaming:

```python
from datasets import load_dataset

dataset = load_dataset(
    "tasktrove",
    split="train",
    streaming=True,
    token=True,  # Only if TaskTrove requires authentication (older datasets releases used use_auth_token)
)
```

This opens a generator-like object. You can iterate over it without ever writing the full dataset to disk.

Iterate through samples in real time:

```python
for i, sample in enumerate(dataset):
    if i >= 10:
        break  # Only inspect the first 10 samples
    print(sample)
```

Each sample is parsed on the fly. You can halt iteration at any point, with no massive file downloads.
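Printing raw samples gets noisy fast. As a rough sketch, you could summarize which fields appear in the first few samples and what Python types they hold. The helper `field_types` is hypothetical and works on any iterable of dict-like samples, so the demo uses made-up in-memory records rather than the real stream.

```python
from itertools import islice
from typing import Any, Dict, Iterable, Mapping, Set


def field_types(samples: Iterable[Mapping[str, Any]], limit: int = 10) -> Dict[str, Set[str]]:
    """Map each field name to the set of type names seen in the first `limit` samples."""
    seen: Dict[str, Set[str]] = {}
    for sample in islice(samples, limit):
        for key, value in sample.items():
            seen.setdefault(key, set()).add(type(value).__name__)
    return seen


if __name__ == "__main__":
    # Stand-in records; with the real stream you would pass `dataset` instead.
    demo = [
        {"task": "summarize", "input": "text", "verifier": None},
        {"task": "classify", "input": "text", "verifier": "annotator_1"},
    ]
    for field, types in sorted(field_types(demo).items()):
        print(field, sorted(types))
```

Because `islice` stops after `limit` items, only that many samples are pulled from the stream.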

Why streaming matters:

Large datasets often stall workflows with slow downloads and wasted storage. Streaming lets you work from an ultralight laptop or cloud instance, only pulling what you need. For TaskTrove, streaming cuts initial setup time from hours to minutes and slashes storage use by 90% or more.

Watch out for:

Network interruptions. If your connection drops mid-stream, data access halts. Add retry logic or checkpointing for production use.
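One way to add retry logic with a simple checkpoint is sketched below. It assumes you can recreate the stream from scratch (for example by calling `load_dataset` again) and that a dropped connection surfaces as `ConnectionError` or `TimeoutError`. Both are assumptions, so check which exceptions your setup actually raises. The name `stream_with_retry` is illustrative.

```python
import time
from typing import Any, Callable, Iterable, Iterator, Tuple, Type


def stream_with_retry(
    make_stream: Callable[[], Iterable[Any]],
    max_retries: int = 3,
    backoff_seconds: float = 1.0,
    retry_on: Tuple[Type[BaseException], ...] = (ConnectionError, TimeoutError),
) -> Iterator[Any]:
    """Yield items from a re-creatable stream, resuming after transient errors."""
    yielded = 0   # checkpoint: how many samples have already been delivered
    failures = 0  # consecutive failures since the last delivered sample
    while True:
        try:
            for index, item in enumerate(make_stream()):
                if index < yielded:
                    continue  # already delivered before the failure
                yield item
                yielded += 1
                failures = 0
            return  # stream finished cleanly
        except retry_on:
            failures += 1
            if failures > max_retries:
                raise
            # Exponential backoff: 1x, 2x, 4x ... the base delay
            time.sleep(backoff_seconds * 2 ** (failures - 1))
```

This sketch resumes by re-reading and discarding samples it has already delivered, which is simple but wasteful deep into a stream. Streaming datasets in the `datasets` library also offer a `skip(n)` method you could use inside `make_stream` instead.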

Parse and Visualize TaskTrove Dataset Samples for Effective Data Inspection

Blindly iterating through raw samples won’t help you understand TaskTrove’s structure. Parsing and visualization expose underlying patterns and outliers:

Write parsing functions for key fields:

TaskTrove samples typically include nested JSON fields like `task`, `input`, `output`, and `verifier`.

```python
def parse_sample(sample):
    return {
        "task": sample.get("task", ""),
        "input": sample.get("input", ""),
        "output": sample.get("output", ""),
        "verifier": sample.get("verifier", ""),
    }
```

Build dynamic visualization components:

Use Streamlit for interactive views:

```python
import streamlit as st

st.title("TaskTrove Sample Viewer")
for i, sample in enumerate(dataset):
    if i >= 10:
        break  # Show only the first 10 samples
    parsed = parse_sample(sample)
    st.write(parsed)
```

For statistical plots, use Matplotlib or Plotly:

```python
import matplotlib.pyplot as plt
import pandas as pd

samples = [parse_sample(s) for s in dataset.take(100)]
df = pd.DataFrame(samples)
df['task'].value_counts().plot(kind='bar')
plt.show()
```

How visualization clarifies complexity:

TaskTrove covers a wide distribution of task types, with annotation density varying sharply across categories. Visualizing frequency bars or annotation patterns lets you spot underrepresented classes or anomalous samples. Researchers using similar techniques reported 30% faster dataset triage and more robust model training outcomes.
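To turn "spot underrepresented classes" into a number, you could count task frequencies and flag anything below a share threshold. This is a sketch only: the 5% cutoff, the helper name `rare_tasks`, and the assumption that `task` holds a hashable value such as a string are all illustrative choices.

```python
from collections import Counter
from typing import Any, Dict, Iterable, Mapping


def rare_tasks(samples: Iterable[Mapping[str, Any]], min_share: float = 0.05) -> Dict[Any, int]:
    """Return task -> count for tasks whose share of the samples is below `min_share`."""
    counts = Counter(sample.get("task", "") for sample in samples)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {task: n for task, n in counts.items() if n / total < min_share}


if __name__ == "__main__":
    # Made-up records standing in for a slice of the stream.
    demo = [{"task": "qa"}] * 39 + [{"task": "translation"}]
    print(rare_tasks(demo))
```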

Watch out for:

Malformed samples. Streaming may deliver incomplete or corrupted records—add try/except blocks to parsing.
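A defensive version of the parsing step might look like this sketch. It assumes a malformed record shows up as something that is not dict-like (for example `None` or a bare string). The names `parse_sample_safely` and `parse_all` are hypothetical.

```python
from typing import Any, Dict, Iterable, List, Optional, Tuple

FIELDS = ("task", "input", "output", "verifier")


def parse_sample_safely(sample: Any) -> Optional[Dict[str, Any]]:
    """Return the parsed fields, or None if the record is not dict-like."""
    try:
        return {field: sample.get(field, "") for field in FIELDS}
    except AttributeError:
        # The record has no .get(), e.g. None or a truncated string
        return None


def parse_all(samples: Iterable[Any]) -> Tuple[List[Dict[str, Any]], int]:
    """Parse every sample, returning the good records and a count of skipped ones."""
    parsed: List[Dict[str, Any]] = []
    skipped = 0
    for sample in samples:
        record = parse_sample_safely(sample)
        if record is None:
            skipped += 1
        else:
            parsed.append(record)
    return parsed, skipped
```

Tracking the skipped count, rather than silently dropping records, tells you whether malformed data is a rare glitch or a systematic problem.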

Implement Verifier Detection to Identify and Validate Dataset Annotations

TaskTrove includes a unique “verifier” role: annotators who check or confirm task accuracy. Verifier detection is vital for assessing annotation quality and flagging bias.

What is verifier detection?

In TaskTrove, `verifier` fields identify whether a sample has been cross-checked. Detecting these roles lets you separate validated samples from raw, unverified ones. This is crucial for downstream analysis, especially in high-stakes domains like medical or legal NLP.

Code to highlight verifier roles:

```python
def detect_verifier(sample):
    verifier = sample.get("verifier", None)
    return verifier is not None and verifier != ""

verified_samples = [s for s in dataset.take(100) if detect_verifier(s)]
print(f"Verified samples: {len(verified_samples)} / 100")
```

For visualization:

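```python
df['is_verified'] = df['verifier'].apply(lambda v: v not in [None, ''])
# value_counts() sorts by frequency, so fix the order to keep labels matched to counts
counts = df['is_verified'].value_counts().reindex([False, True], fill_value=0)
counts.plot.pie(labels=['Unverified', 'Verified'])
plt.show()
```

Why verifier detection matters: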

Research teams often overlook annotation quality. MarkTechPost notes TaskTrove’s annotation protocol includes verifier cross-checks, but coverage is uneven—some splits have <20% verified data. By quantifying this, you can focus modeling on high-confidence samples and flag risky regions. This step directly improves model trustworthiness and reduces error propagation.

Watch out for:

False negatives. If the verifier field is inconsistently formatted, detection may miss valid entries. Always inspect raw values before final filtering.
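One way to guard against inconsistent formatting is to normalize the field before deciding. This sketch assumes that blank strings, placeholder strings like "none" or "n/a", and empty containers all mean "not verified". That list of markers is a guess, so adjust it after inspecting the raw values in your stream. The function names are hypothetical.

```python
from collections import Counter
from typing import Any, Iterable, Mapping

# Assumed placeholders for "no verifier"; extend after inspecting real data.
EMPTY_MARKERS = {"", "none", "null", "n/a", "nan"}


def is_verified(value: Any) -> bool:
    """Decide whether a raw verifier value counts as a real verification."""
    if value is None:
        return False
    if isinstance(value, bool):
        return value
    if isinstance(value, str):
        return value.strip().lower() not in EMPTY_MARKERS
    if isinstance(value, (list, tuple, set, dict)):
        return len(value) > 0
    return True  # any other non-empty value is treated as verified


def raw_value_summary(samples: Iterable[Mapping[str, Any]]) -> Counter:
    """Count the distinct raw verifier values (by repr) for manual inspection."""
    return Counter(repr(sample.get("verifier")) for sample in samples)
```

Run `raw_value_summary` on a slice of the stream first; the most common values show you which formats `is_verified` has to handle.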

Recap and Next Steps: Streaming Analysis for Massive Datasets

With this workflow, you’ve sidestepped storage bottlenecks, parsed complex samples, visualized dataset structure, and pinpointed annotation quality—all in real time. Streaming not only accelerates exploration but also trims infrastructure costs and unlocks faster iteration, as shown with TaskTrove.

The same pipeline adapts to any Hugging Face dataset with streaming enabled. For large-scale projects, combine these steps with batch processing, distributed compute, or automated quality checks. If you’re building NLP models or running annotation audits, this approach will save weeks of grunt work and give you sharper insights.
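For the batch processing step, a minimal sketch is to group the stream into fixed-size lists without loading it all into memory. The helper `batched_stream` is illustrative and works on any iterable, including a streamed dataset.

```python
from itertools import islice
from typing import Any, Iterable, Iterator, List


def batched_stream(samples: Iterable[Any], size: int) -> Iterator[List[Any]]:
    """Yield consecutive lists of up to `size` samples; the last batch may be shorter."""
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(samples)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


if __name__ == "__main__":
    for batch in batched_stream(range(5), 2):
        print(batch)
```

Each batch can then be handed to a model or a quality check as a unit, while only `size` samples sit in memory at a time.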

Next action: Extend your pipeline to include model inference on streamed samples, or integrate quality metrics for deeper annotation analysis. The streaming paradigm is here—don’t waste cycles on outdated, static workflows.

Key Takeaways

- Streaming TaskTrove eliminates the need for massive local storage, making large-scale dataset analysis accessible.

- Real-time parsing and visualization enhance workflow efficiency for researchers and data scientists.

- Verifier detection helps ensure data quality and integrity when working with complex research datasets.