Introduction to ChatGPT’s Images 2.0 Model and Its Text Generation Capabilities

OpenAI’s new Images 2.0 model, built into ChatGPT, can now make pictures with clear, readable text. That’s a big step forward. The model doesn’t just draw images; it writes words inside them that look almost as clean as lettering made by a human designer. This is a surprise, since older AI models often messed up letters, spacing, and even simple sentences. OpenAI also says the update helps the model make charts and diagrams and pull info from the web to make images smarter and more useful [Source: Google News]. This means you can ask ChatGPT to make a chart about stock prices, a diagram for a science project, or a poster with neat, correct words. People who use AI to make graphics and visual content are paying close attention to these changes.

Technical Advancements Behind Images 2.0 Enhancing Text Accuracy

Images 2.0 got better because OpenAI rebuilt how the model understands and makes images with text. Older models, like DALL-E 2, often made letters that looked weird or mashed together. Sometimes, you’d ask for “OpenAI” and get “0penA1” or random symbols. The new model uses smarter training. It learned from millions of real images with clear text, so now it knows what letters and words should look like.
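To put a number on errors like “0penA1”, one option is to compare the text you asked for with the text that actually shows up in the image. The sketch below is only an illustration, not how OpenAI measures accuracy. It assumes you already have the rendered text as a string (for example, typed in by hand or read by an OCR tool of your choice), and the function names `edit_distance` and `char_error_rate` are made up for this example.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: fewest single-character inserts,
    deletes, or substitutions needed to turn a into b."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to ""
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(
                prev[j] + 1,         # delete ca
                curr[j - 1] + 1,     # insert cb
                prev[j - 1] + cost,  # substitute (or keep) the character
            ))
        prev = curr
    return prev[-1]


def char_error_rate(requested: str, rendered: str) -> float:
    """Edit distance divided by the length of the requested text."""
    if not requested:
        return 0.0 if not rendered else 1.0
    return edit_distance(requested, rendered) / len(requested)
```

For the example above, `edit_distance("OpenAI", "0penA1")` is 2 (the “O” became “0” and the “I” became “1”), so the character error rate is 2 out of 6, or about 0.33. A perfect rendering scores 0.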

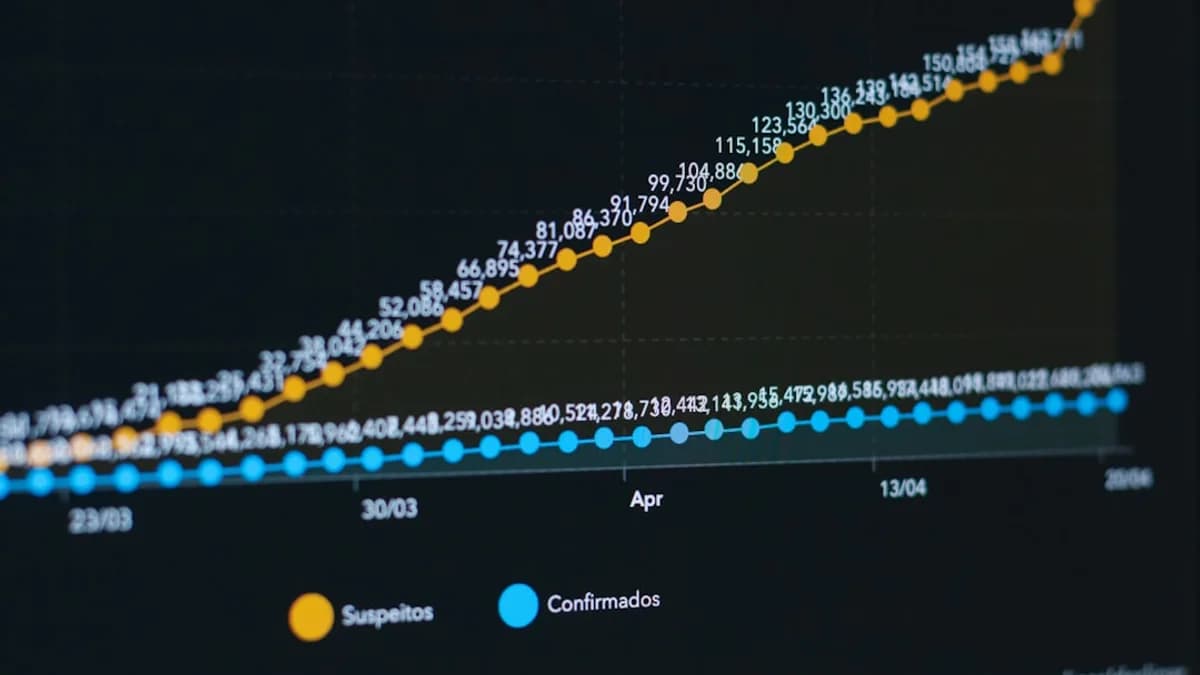

The model also got better at drawing charts and diagrams. It can read data, sort it, and turn it into visuals that make sense, like bar graphs with labeled axes or flowcharts with readable boxes. Before, AI image tools often made messy charts with mixed-up numbers and labels. Now, Images 2.0 can handle complex layouts and keep everything in order.

One of the biggest changes is web integration. The model can pull fresh information from the internet to make images. So, if you ask for a chart of today’s weather, it can grab current data and put it into a nice-looking graph. This helps the model stay up-to-date and makes its results more accurate and relevant [Source: Google News]. The mix of better training and live web data means Images 2.0 is not just guessing—it’s showing real facts in readable ways.

Comparing Images 2.0 with Previous Versions and Competitors’ Technologies

Images 2.0 fixes old problems that have bugged AI image tools for years. DALL-E 2 and earlier versions struggled with text. They’d make pictures with strange or broken letters. Even simple words often got jumbled. This made them hard to use for posters, infographics, or anything that needed clear writing.

Google’s AI tools, like Imagen and Gemini, have also tried to solve the problem. Google has worked on making diagrams and charts, but its models sometimes miss details or put text in the wrong place. Both OpenAI and Google have been racing to get text right. But Images 2.0 seems to leap ahead. It can make pictures with sharp, readable words, and it handles tricky diagrams better than before [Source: Google News].

The update gives OpenAI a strong edge in the AI image market. More people want tools that can make not just pretty pictures, but useful graphics with correct words. This matters for teachers, business people, and anyone who needs to share info visually. OpenAI’s move also puts pressure on rivals to catch up. As AI becomes more common in design, marketing, and data work, the ability to make images with accurate text will help OpenAI stand out.

Implications of Enhanced Text Generation in AI-Generated Images for Users and Industries

Clear text in AI-made images opens new doors for many people. Teachers can ask ChatGPT to make posters for lessons or diagrams for science classes. The text will be easy to read, so students don’t get confused. Analysts can create charts with numbers and labels that make sense, speeding up reports and saving time.

For marketers, this means faster creation of ads, social posts, or flyers. Instead of spending hours on design software, they can ask ChatGPT for a quick graphic with the right words and info. Writers and content creators can use the tool to make illustrations for articles, slides for talks, or visuals for blogs. Accurate text helps them trust the output and use it without lots of editing.

But there are challenges. If the AI pulls wrong facts from the web, it can make charts or images that spread mistakes. For example, if the internet source is outdated or wrong, the image will show that error. This could lead to misinformation, especially in news, education, or business. People will need to double-check facts before sharing AI-made images.
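One way to double-check a chart before sharing it is to compare the numbers it shows against a source you trust and to flag anything that came from an old page. The sketch below is a hedged illustration of that idea only. It is not part of ChatGPT or any OpenAI tool, and every name in it (`ChartValue`, `review_chart_values`, the one-day age limit, the 1% tolerance) is an assumption chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ChartValue:
    label: str              # e.g. a bar or axis label read off the chart
    value: float            # the number shown in the chart
    retrieved_at: datetime  # when the underlying source was fetched


def review_chart_values(values, trusted, now,
                        max_age=timedelta(days=1), tolerance=0.01):
    """Return a list of human-readable issues; an empty list means
    nothing was flagged. `trusted` maps labels to reference numbers."""
    issues = []
    for v in values:
        # Flag data whose source is older than the allowed age.
        if now - v.retrieved_at > max_age:
            issues.append(f"{v.label}: source is older than {max_age}")
        ref = trusted.get(v.label)
        if ref is None:
            issues.append(f"{v.label}: no trusted value to compare against")
        # Relative tolerance, with a floor of 1.0 so values near zero
        # are not held to an impossibly tight limit.
        elif abs(v.value - ref) > tolerance * max(abs(ref), 1.0):
            issues.append(f"{v.label}: {v.value} differs from trusted value {ref}")
    return issues
```

A reviewer would fill in `values` from the chart, `trusted` from a source they rely on, and read through whatever the function returns before publishing. The limits are arbitrary defaults and should be set to fit the kind of data being shown.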

Another risk is that the model might misplace or garble text, or create confusing layouts, if the prompts aren’t clear. For industries like finance or healthcare, where data must be exact, this could cause trouble. Companies will need to set rules and check AI-made images before using them in serious settings.

Still, the upgrade means that more people can use AI to make visual content. It’s cheaper and faster than hiring designers for simple jobs. Small businesses, students, and anyone with a smartphone can make graphics that look professional. This levels the playing field and lets more voices be heard. We’re likely to see a wave of new content, powered by AI, in classrooms, offices, and online.

Future Prospects: What Images 2.0 Means for the Evolution of Multimodal AI Models

With Images 2.0, AI tools are getting closer to understanding the world like humans do. Being able to make both pictures and readable text means the models are more “multimodal”—they can use words and visuals together. This helps them answer questions, explain ideas, and even help with tasks like making study guides or business pitches.

The web integration is a big deal. Now, ChatGPT can grab live info and turn it into useful images. This could help with real-time news, weather updates, or tracking events as they happen. As more AI models use web data, they’ll get smarter and more helpful.

Looking ahead, we might see AI tools that make whole documents—mixing charts, diagrams, and written explanations. They could help doctors with medical reports, students with homework, or businesses with presentations. If OpenAI keeps improving text and visual accuracy, other companies will step up their own models. This could spark new ideas about how humans and AI work together.

Conclusion: Evaluating the Significance of ChatGPT’s Images 2.0 in AI Innovation

ChatGPT’s Images 2.0 marks a big leap in how AI creates pictures with readable, correct text. The update fixes old problems, adds smart web integration, and makes charts and diagrams more useful [Source: Google News]. OpenAI now has a clear advantage in the race to make AI tools that help people work, learn, and share information.

The ability to make images with sharp words means more people can use AI for real jobs—not just for fun art, but for teaching, marketing, and business. As the technology keeps improving, we’ll see more creative and practical uses. But users should still check facts and be careful with AI-made images.

OpenAI’s move shows that AI can do more than just draw—it can help people make sense of the world. The next steps will likely bring even smarter, more helpful tools. If you use AI to make charts, posters, or diagrams, now is a good time to try Images 2.0 and see how it can help you work faster and smarter.

Why It Matters

- Images 2.0 lets users create graphics with correct, human-like text for posters and diagrams.

- The model’s improved ability to generate readable charts and diagrams boosts productivity and accuracy.

- Web integration means AI-generated visuals can use up-to-date information, making content more relevant.