Introduction: Understanding the Rising Tide of AI Resistance

From headline-grabbing lawsuits against AI companies to viral protests by artists and coders, resistance to artificial intelligence is no longer a niche concern; it is an undercurrent shaping the future of technology. As AI systems rapidly integrate into everything from creative industries to healthcare, a swelling chorus of critics is demanding a pause, or at least a more thoughtful approach. Recent online debates, like those dissected in Steph Vee’s blog and the spirited exchanges on Hacker News, reveal that skepticism isn’t just growing. It is evolving, drawing in technologists, ethicists, and ordinary users alike. What’s behind this groundswell of anti-AI sentiment, and what does it mean for the trajectory of innovation? To answer that, we must unpack the layers of resistance and examine where legitimate caution ends and reactionary fear begins.

Key Drivers Behind the Growing Anti-AI Sentiment

The backlash against AI is driven by a complex mix of ethical, social, and economic anxieties. One of the most persistent concerns is the potential for AI systems to entrench or amplify bias. When algorithmic decisions—whether in hiring, loan approvals, or criminal justice—reflect the prejudices of their training data, they can perpetuate existing inequalities on an unprecedented scale. While AI developers tout technical fixes, critics argue that the underlying issues are deeply societal, not just computational.

Privacy is another flashpoint. As AI models hoover up vast quantities of personal data to improve their performance, fears mount about surveillance, data breaches, and the erosion of individual autonomy. The Cambridge Analytica scandal and more recent incidents involving facial recognition highlight how easily personal information can be weaponized, often without meaningful consent or oversight.

Job displacement looms large as well. Unlike earlier waves of automation that primarily affected manual labor, AI threatens white-collar professions such as legal research, journalism, and graphic design, raising questions about the future of work and social stability. This anxiety is not unfounded: a 2017 McKinsey Global Institute report estimated that automation, including AI, could displace between 400 million and 800 million workers worldwide by 2030.

Layered atop these concerns is a broader existential unease about ceding human agency to machines. When AI systems make decisions that even their creators cannot fully explain, trust erodes. This “black box” problem isn’t just technical; it’s psychological. People want to understand—and, when necessary, challenge—the logic behind consequential choices. The opacity of many AI models makes this difficult, fueling suspicion and resistance.

Finally, cultural apprehensions about the pace of technological change play a subtle but significant role. Rapid AI adoption can feel like a forced experiment, with society as the unwitting guinea pig. Unlike the slow, generational transitions of the past (think the spread of electricity or the automobile), AI is rolling out at breakneck speed, sometimes outpacing our ability to adapt laws, norms, and ethics. This sense of dislocation—of technology running ahead of society—adds fuel to the fire.

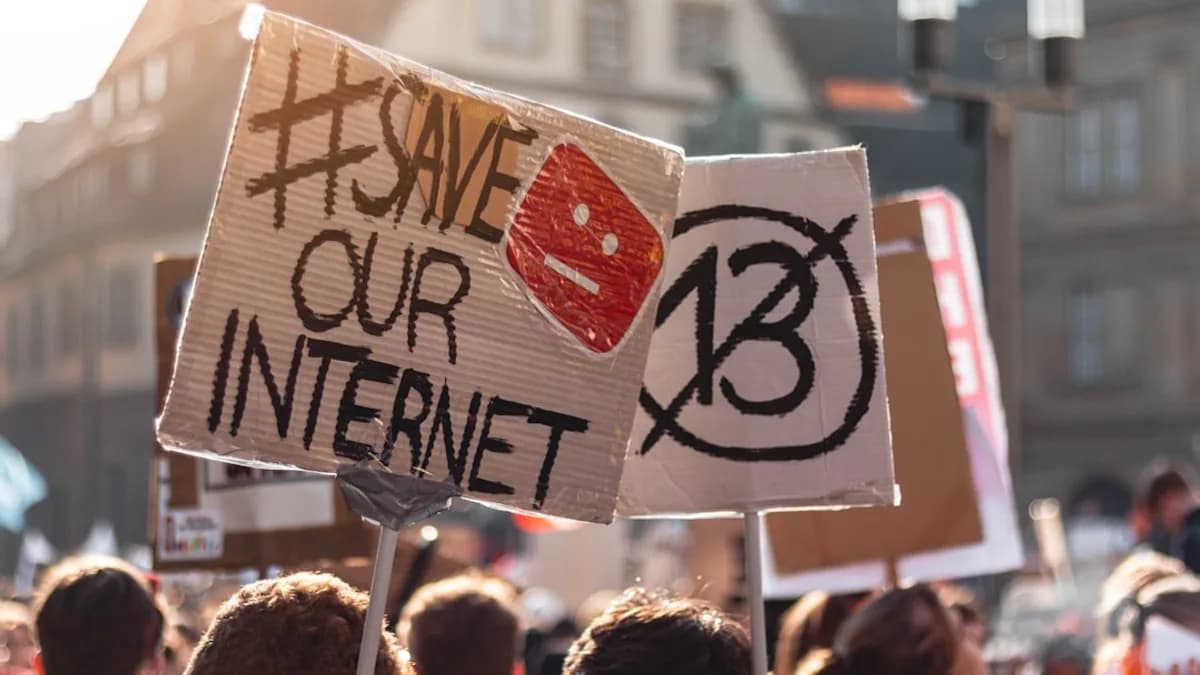

Examining Notable Instances of AI Resistance in Recent Discourse

The current wave of AI resistance is as much a product of grassroots discussion as it is of top-down policy debates. Steph Vee’s recent blog post catalogs a variety of anti-AI sentiments percolating through tech-savvy circles, with Hacker News serving as a real-time barometer for community attitudes. The 242 comments and 258 upvotes on the Hacker News thread are telling, not just in volume but in the diversity and sophistication of the arguments raised.

Some critiques are deeply technical. Contributors dissect the limitations of current large language models (LLMs), highlighting hallucinations, lack of contextual understanding, and the brittleness of model outputs. Others focus on the ethical landscape, questioning whether AI-generated art or code is a form of theft or plagiarism, especially when trained on unlicensed data. These voices echo recent legal disputes over AI’s use of copyrighted works in training datasets.

A notable thread in the discussion, both on the blog and in the comments, is emotional: a sense of loss, whether of professional pride, creative uniqueness, or simply the joy of human endeavor. For artists, writers, and coders, the encroachment of AI can feel like an existential threat, cheapening the value of human expression.

What unites these perspectives is a recognition that AI is not just a tool, but a force with the potential to reshape—and perhaps destabilize—entire sectors. The fact that skepticism is coming not just from policy wonks or ethicists, but from the very technologists who build and deploy these systems, signals a maturing debate. This is not Luddite rejectionism; it’s a call for sobriety, reflection, and accountability.

Implications of AI Resistance for Technology Development and Adoption

The growing resistance to AI is not merely rhetorical; it has tangible consequences for the industry’s evolution. Public skepticism can directly influence research funding, as governments and private backers grow wary of supporting technologies perceived as risky or socially destabilizing. In the European Union, for instance, strong public and political concern has already translated into some of the world’s strictest AI regulations, including the AI Act, adopted in 2024.

Industry adoption also faces headwinds. Companies that once raced to integrate AI into their products now weigh the reputational and ethical risks more carefully. Consider Google’s decision to delay or limit certain AI features due to accuracy and fairness concerns—a sign that even tech giants feel the pressure to slow down and get it right. This recalibration may be healthy: resistance can serve as a check on reckless deployment, forcing firms to prioritize safety, transparency, and user consent.

Importantly, public opinion is emerging as a powerful lever in shaping the governance of AI. Regulatory frameworks are, in part, a response to popular sentiment; lawmakers rarely act in a vacuum. As resistance gains traction, it is likely to drive greater demand for explainability, robust audit trails, and mechanisms for redress when AI systems go awry. In this sense, anti-AI sentiment is not just an obstacle—it’s a catalyst for more socially responsive technology.

From a historical perspective, it’s worth recalling that every major technological revolution—from the industrial loom to the internet—has provoked backlash. What distinguishes the current moment is the speed and scope of AI’s encroachment into intimate domains of life, and the unprecedented potential for harm at scale.

Balancing Innovation and Caution: Navigating the Path Forward with AI

If AI is to fulfill its promise without succumbing to its perils, the industry must chart a path that marries innovation with caution. Responsible AI development starts with transparency. This means not only opening the black box of algorithmic decision-making, but also clearly communicating the limitations and uncertainties inherent in AI systems. Open-source initiatives, third-party audits, and standardized benchmarks can all contribute to a more accountable ecosystem.

Inclusivity is equally crucial. Too often, the voices shaping AI are concentrated in a handful of tech hubs, disconnected from the communities most affected by these technologies. Engaging a broader spectrum of stakeholders—from labor unions and disability advocates to artists and end-users—can unearth blind spots and lead to fairer, more robust solutions.

Ethical guidelines should be more than window dressing. They need teeth: enforceable standards, legal liability for harm, and clear avenues for recourse. The White House’s Blueprint for an AI Bill of Rights and the EU’s AI Act are steps in the right direction, but they must be backed by meaningful enforcement and continuous dialogue with civil society.

Finally, fostering informed public debate is key. Misinformation and fear-mongering can hinder progress as much as reckless optimism. The challenge is to bridge the gap between AI proponents—who see the technology’s transformative potential—and skeptics, who rightly demand safeguards. This means demystifying AI, rooting discussions in evidence rather than hype, and creating spaces for constructive engagement.

Conclusion: Reflecting on the Future of AI Amidst Resistance

The rise of AI resistance is neither a fleeting panic nor a simple reactionary spasm. It is a vital check on unrestrained technological enthusiasm. Engaging with, rather than dismissing, these concerns is essential if AI is to serve rather than subvert human values. The path forward requires humility from technologists, vigilance from regulators, and active participation from the public. Only by acknowledging the legitimacy of resistance can we hope to build AI systems that are not just innovative, but genuinely trustworthy. For those invested in the future of technology, the message is clear: shaping a just and ethical AI era is everyone’s responsibility.